Generating Referring Expressions with Symbolic and Iconic Elements

In this project, Ting Han (together with Sina Zarrieß) explores multimodal ensembles of utterances (language) and sketches as a means of referring to concrete objects. We augmented an existing sketch dataset with verbal descriptions and trained neural generation models on it.

Abstract

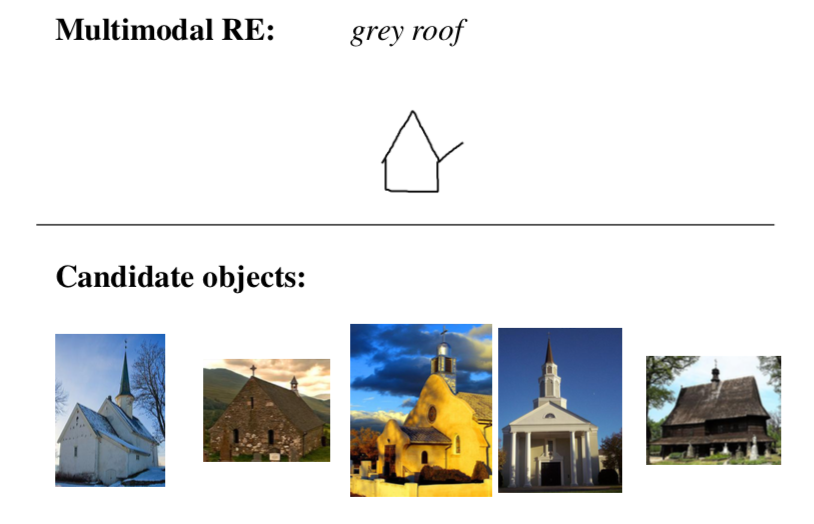

Verbal utterances are not the only way of expressing communicative intentions. When something is difficult to describe in words (e.g., the exact shape of a house), speakers often intuitively resort to a range of non-verbal resources, such as hand-drawn sketches, in which iconic elements are deployed to achieve communicative success. In these cases, verbal utterances and sketches together form multimodal referring expressions (REs) that enable a recipient to identify target objects. We are interested in enabling robots and virtual agents to generate such multimodal referring expressions (M-REG) and thereby facilitate human-agent communication.

Methods

We collected a multimodal object description corpus [2] by augmenting the pairs of hand-drawn sketches and photographs in the SketchyDB with verbal descriptions. This corpus makes it possible to investigate the interplay between iconic (sketch) and symbolic (natural language) semantics in object descriptions. Using a model of grounded language semantics and a model of sketch-to-image mapping, we evaluated the joint contribution of language and sketches in an image retrieval task. We show that adding even very reduced iconic information to a verbal image description improves the performance of the image retrieval model.
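The paragraph above does not specify how the outputs of the language model and the sketch model are combined during retrieval. As a purely illustrative sketch, assume each model assigns a score to every candidate image and the two score lists are merged by a weighted sum after min-max normalisation (simple late fusion). The functions `min_max`, `fuse_scores` and `target_rank`, the `weight` parameter, and the toy scores are all hypothetical and are not taken from the papers.

```python
from typing import List, Sequence


def min_max(scores: Sequence[float]) -> List[float]:
    """Rescale scores to [0, 1] so models with different score ranges are comparable."""
    lo, hi = min(scores), max(scores)
    if hi == lo:  # all candidates scored equally: this model gives no ranking information
        return [0.0] * len(scores)
    return [(s - lo) / (hi - lo) for s in scores]


def fuse_scores(lang_scores: Sequence[float],
                sketch_scores: Sequence[float],
                weight: float = 0.5) -> List[float]:
    """Hypothetical late fusion: weighted sum of normalised language and sketch scores.

    Each input holds one score per candidate image (higher = better match).
    `weight` is the share given to the language model; 1 - weight goes to the sketch model.
    """
    if len(lang_scores) != len(sketch_scores):
        raise ValueError("both models must score the same candidate images")
    lang_n = min_max(lang_scores)
    sketch_n = min_max(sketch_scores)
    return [weight * lv + (1 - weight) * sv for lv, sv in zip(lang_n, sketch_n)]


def target_rank(scores: Sequence[float], target: int) -> int:
    """1-based rank of the target image; ties are resolved in the target's favour."""
    return 1 + sum(1 for s in scores if s > scores[target])


if __name__ == "__main__":
    # Toy scores for four candidate images; index 2 is the target.
    lang = [0.9, 0.2, 0.85, 0.1]    # the description also fits image 0
    sketch = [0.1, 0.3, 0.8, 0.85]  # the sketch also resembles image 3
    print(target_rank(lang, 2))                       # 2
    print(target_rank(sketch, 2))                     # 2
    print(target_rank(fuse_scores(lang, sketch), 2))  # 1
```

In this toy case each modality on its own ranks the target second, but because the description and the sketch confuse the target with different distractors, the fused score ranks it first. This illustrates the intuition behind combining iconic and symbolic information; it does not reproduce the reported experiments.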

Using the collected data, we trained two M-REG models and evaluated them in unimodal and multimodal object identification tasks conducted as crowdsourcing experiments. The results show that partial hand-drawn sketches improve the effectiveness of verbal REs. Interestingly, the language generation system that performs worse in the unimodal condition, because its REs are more ambiguous, yields better results in the multimodal condition.
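The crowdsourced comparison described above amounts to measuring, for each generation system, how often participants pick the intended object given the verbal RE alone versus the RE plus a partial sketch. A minimal sketch of that tally, assuming each trial is logged as a (system, condition, correct) record; the record format, the condition labels `"verbal"` and `"multimodal"`, and the function names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# One crowdsourced trial: (system name, condition, did the worker pick the target?)
Trial = Tuple[str, str, bool]


def accuracy_by_condition(trials: Iterable[Trial]) -> Dict[Tuple[str, str], float]:
    """Identification accuracy for every (system, condition) pair seen in the trials."""
    hits: Dict[Tuple[str, str], int] = defaultdict(int)
    totals: Dict[Tuple[str, str], int] = defaultdict(int)
    for system, condition, correct in trials:
        key = (system, condition)
        totals[key] += 1
        hits[key] += int(correct)
    return {key: hits[key] / totals[key] for key in totals}


def sketch_gain(acc: Dict[Tuple[str, str], float], system: str) -> float:
    """Change in a system's accuracy when a partial sketch is added (multimodal minus verbal)."""
    return acc[(system, "multimodal")] - acc[(system, "verbal")]


if __name__ == "__main__":
    # Made-up trials for illustration only; these are not experimental results.
    trials = [
        ("system_A", "verbal", True), ("system_A", "verbal", False),
        ("system_A", "multimodal", True), ("system_A", "multimodal", True),
    ]
    acc = accuracy_by_condition(trials)
    print(acc[("system_A", "verbal")])      # 0.5
    print(acc[("system_A", "multimodal")])  # 1.0
    print(sketch_gain(acc, "system_A"))     # 0.5
```

Comparing accuracies per condition, rather than a single pooled score, is what makes it possible to observe that a system can rank lower with verbal REs alone yet higher once sketches are added.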

Publications

(Han et al. 2018) (Han and Schlangen 2017) (Han, Hough, and Schlangen 2017)


Learning to describe multimodally from parallel unimodal data? A pilot study on verbal and sketched object descriptions. Proceedings of the 22nd Workshop on the Semantics and Pragmatics of Dialogue (AixDial), 2018.

BibTeX

@inproceedings{Han-2018,
  author = {Han, Ting and Zarrieß, Sina and Komatani, Kazunori and Schlangen, David},
  booktitle = {Proceedings of the 22nd Workshop on the Semantics and Pragmatics of Dialogue (AixDial)},
  location = {Aix-en-Provence (France)},
  title = {{Learning to describe multimodally from parallel unimodal data? A pilot study on verbal and sketched object descriptions}},
  year = {2018}
}

Natural Language Informs the Interpretation of Iconic Gestures. A Computational Approach. The 8th International Joint Conference on Natural Language Processing. Proceedings of the Conference. Vol. 2: Short Papers, 2017.

BibTeX

@inproceedings{Han-2017-3,
  author = {Han, Ting and Hough, Julian and Schlangen, David},
  booktitle = {The 8th International Joint Conference on Natural Language Processing. Proceedings of the Conference. Vol. 2: Short Papers},
  isbn = {978-1-948087-01-8},
  location = {Taipei},
  pages = {134--139},
  title = {{Natural Language Informs the Interpretation of Iconic Gestures. A Computational Approach}},
  year = {2017}
}

Draw and Tell. Multimodal Descriptions Outperform Verbal- or Sketch-Only Descriptions in an Image Retrieval Task. The 8th International Joint Conference on Natural Language Processing. Proceedings of the Conference. Vol. 2: Short Papers, 2017.

BibTeX

@inproceedings{Han-2017-4,
  author = {Han, Ting and Schlangen, David},
  booktitle = {The 8th International Joint Conference on Natural Language Processing. Proceedings of the Conference. Vol. 2: Short Papers},
  isbn = {978-1-948087-01-8},
  location = {Taipei},
  pages = {361--365},
  title = {{Draw and Tell. Multimodal Descriptions Outperform Verbal- or Sketch-Only Descriptions in an Image Retrieval Task}},
  year = {2017}
}